Every token, everywhere, all at once

Disclosure: Amplify is an investor in Inception.

At Stanford there is a course called Deep Generative Models. Were you to enroll, you would learn about the whole spectrum of algorithms and probabilistic methods for generating text, images, audio, and more. The professors would start with the simplest, most inefficient algorithms and architectures, gradually moving towards more complex, efficient solutions as the semester went on. Sort of like a textbook.

Since 2018, when professors Stefano Ermon and Aditya Grover taught the first iteration of this course, the world has exploded with incredibly powerful LLMs. Each month (or week) yields a new release from a megalab beating benchmarks and so on and so forth. Given how successful these models have become, you’d reasonably guess that they make use of rather complex, advanced techniques from this “textbook” of algorithms for generative models. Surely underlying these incredible machines is incredible algorithmic depth.

But you’d have guessed wrong! Despite autoregression being perhaps the most inefficient possible way to generate text, it has formed the foundation of all of today’s most popular and widely adopted LLMs. Nearly all SOTA models today generate tokens one at a time, the most basic and poorly optimized way to do their job. Curious indeed.

Now if all of this is true, a reader might naturally wonder…what’s on the other end of the spectrum? What’s the most efficient, cutting-edge way to generate data? That would be diffusion. Instead of generating tokens sequentially, the model works its way to the final answer all at once, like a digital sculptor from a block of marble embeddings. Diffusion is how basically all image and video models work today (like Runway), and is starting to finally make its way into text too.

The promise of diffusion – this most efficient but complex method of generation – is bonkers speed. And indeed, the leading diffusion LLM, Mercury 2, is undoubtedly the world’s fastest reasoning language model. It produces 1,009 tokens/sec on NVIDIA Blackwell GPUs at quality that’s competitive with leading speed-optimized models. This is 10x faster than Haiku 4.5, so fast that as a user you start to feel like the model’s responses are almost instantaneous.

Behind this beast of a model is Inception, cofounded by none other than Stefano (CEO) and Aditya (CTO), plus their longtime collaborator Volodymyr Kuleshov (Chief Scientist). This all-star academic team has their fingerprints on much of the history of diffusion models, and had to solve several major challenges to get diffusion to work with the discrete medium of text.

Based on extensive interviews with the Inception team, this post will tell the story of diffusion’s origins, how it made its way to language, and what it took to get it production-ready for Mercury and Mercury 2:

- A brief (academic) history of diffusion models

- Bringing diffusion models to text

- Taking text diffusion models from academia to production

- Inception, Mercury 2, and dLLMs of the future

Click on any section to navigate straight here if you’re into the whole brevity thing.

A brief (academic) history of diffusion models

To understand how we got to diffusion models for text, you need to understand how we got to diffusion models for images.

You could reasonably argue that this all started in 2015 with Sohl-Dickstein et al.’s Deep Unsupervised Learning using Nonequilibrium Thermodynamics. The paper explores a new method for training a generative model through diffusion:

“The essential idea, inspired by non-equilibrium statistical physics, is to systematically and slowly destroy structure in a data distribution through an iterative forward diffusion process. We then learn a reverse diffusion process that restores structure in data, yielding a highly flexible and tractable generative model of the data. This approach allows us to rapidly learn, sample from, and evaluate probabilities in deep generative models with thousands of layers or time steps, as well as to compute conditional and posterior probabilities under the learned model.”

This was probably the birth of diffusion as far as deep learning is concerned, but it was viewed mostly as an impractical, if academically interesting, idea. At the time, state of the art for generating images was GANs, or Generative Adversarial Networks. They were promising but painfully slow and hard to work with.

Around this time is also when our protagonists first met. In 2016, Stefano was running a lab at Stanford. It is also when Aditya started his PhD at said lab to start exploring the open space around generative models and probabilistic modeling. Meanwhile, Volodymyr was also a PhD student at Stanford, but in another lab working mostly on computational bio. He eventually joined Stefano’s lab as a postdoc in 2017…which means this team has been working together for close to 10 years. Ideas around diffusion and generative models were circulating, but it was ultimately a niche discipline with a small community around it.

Niche as it was, the community continued to make progress. In 2019, Generative Modeling by Estimating Gradients of the Data Distribution from Stefano and Yang Song introduced the idea of score-based generative modeling. Instead of trying to model the probability distribution of your data directly, the key idea was to learn the score function: the direction in which data becomes more likely. This paper was one of the first to seriously challenge the dominance of GANs, showing that an alternative generative paradigm could produce highly competitive results. The idea was rapidly picked up by the research community and became one of the key conceptual foundations for modern diffusion methods.

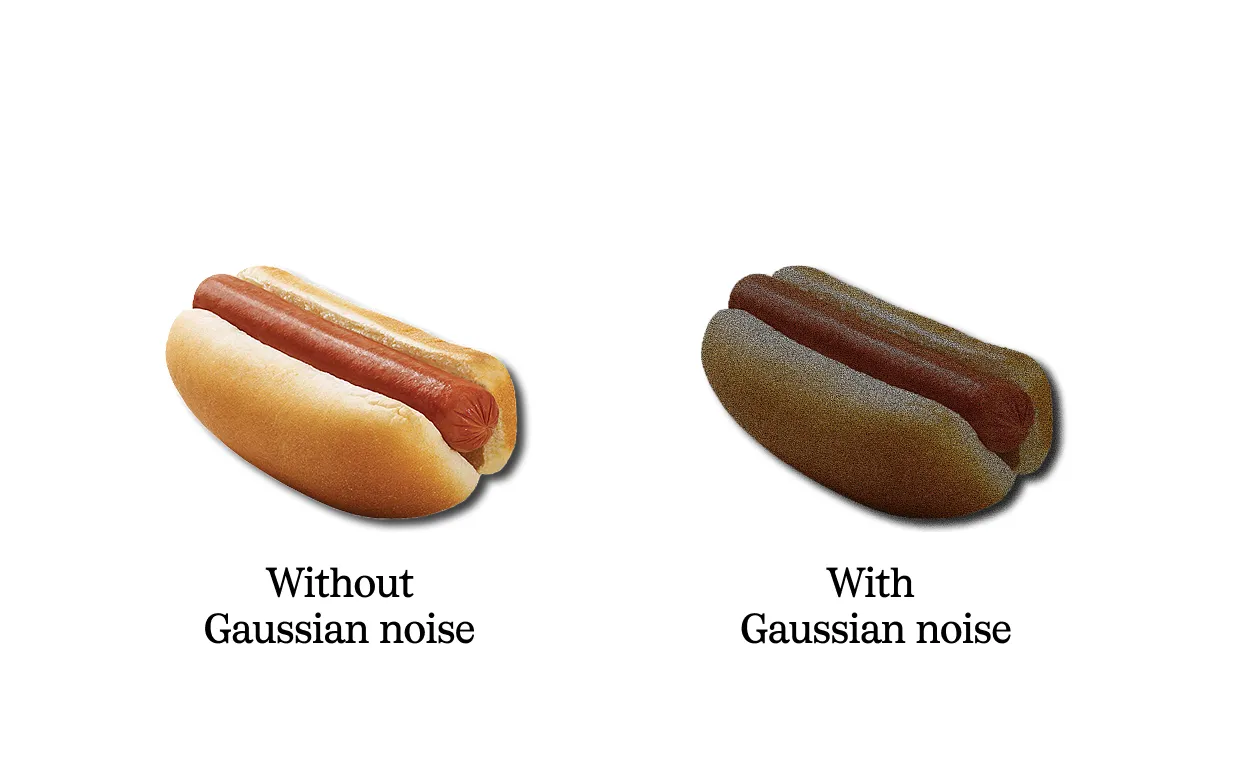

The way these new types of generative models worked is through the idea of noise. To train a model to generate an image, you essentially teach it in reverse. You start with an image, and then gradually add Gaussian noise to it in the form of a random small number added to each pixel. This is done repeatedly over some N number of steps – called a forward process – until the image is basically complete noise and every pixel is a random number. You then train a model to undo this noise, and predict what the original image was, which is the direction in which data becomes more likely (the score function).

Early diffusion-style models were an exciting proof of concept, but they were way too slow to work in practice. Sampling could require hundreds or even thousands of steps, which meant generating a single image could take minutes. That same year, Stefano’s lab authored another paper introducing DDIM, which showed how to reduce the number of sampling steps dramatically, bringing it down toward 20 to 50 while preserving quality. This was one of the key breakthroughs that made diffusion start to look practical.

But diffusion for images really broke into the mainstream when Rombach et al. introduced Latent Diffusion Models. This paper introduced two critical ideas:

First, they figured out how to compress the space that these diffusion models were operating in. Instead of working through pixels – a massive mathematical space that could take hundreds of GPU days to train on – they proposed a method to instead use the latent space generated by a class of pre-trained autoencoders. These autoencoders were trained to find an optimal balance between reducing the complexity of the pixel space and preserving as much detail as possible. The result was 8x compression of the original pixel space and a model that could run on a consumer GPU.

Second, they introduced text labels into the model training process so that you could prompt the model to produce the image you wanted. Via layers of cross-attention trained on text-image pairs, the models could learn not just how to generate an image, but how to generate a particular image.

These advances were so meaningful that they culminated in the launch of a consumer-facing model that you’ve probably heard of: Stable Diffusion. It was the first of its kind, a diffusion model finally fast and powerful enough to actually ship. And as you can now see, it represented almost 10 years in the making. Research can often feel like it was dropped from the sky, but it’s actually an origin story of how one thing led to another, and at some point you have something that works.

Today’s diffusion models for images and video follow this basic architecture. During pretraining, every training image is paired with a text description (🙏 CLIP). The training objective is given this noisy image and this text embedding, predict the noise. Then, to improve model adherence to prompt, 10-20% of training time the prompt is randomly dropped, forcing the network to also learn random generation (called classifier-free guidance). At inference time, you generate an image with a prompt, an image without a prompt, and take the difference.

Bringing diffusion models to text

Image generation was a natural first beachhead for diffusion models, because images exist in a continuous pixel space that’s easy to add Gaussian noise to (literally, basic addition).

Unfortunately, text does not enjoy this same luxury. Text is discrete. You cannot simply add or subtract a letter to a word and expect that the meaning is “roughly the same.” A pixel plus a small random number is pretty similar to the original pixel; a word plus or minus a letter is nonsense. Just ask my editor.

As such, it took several years of additional research to figure out how to get diffusion models working for text. And this is undoubtedly part of why autoregressive models have become the standard, despite their naive simplicity. All things considered, we would prefer nuanced, efficient methods like diffusion…but when ChatGPT was originally released in 2022, nobody knew how to make them work.

Token masks to approximate Gaussian noise

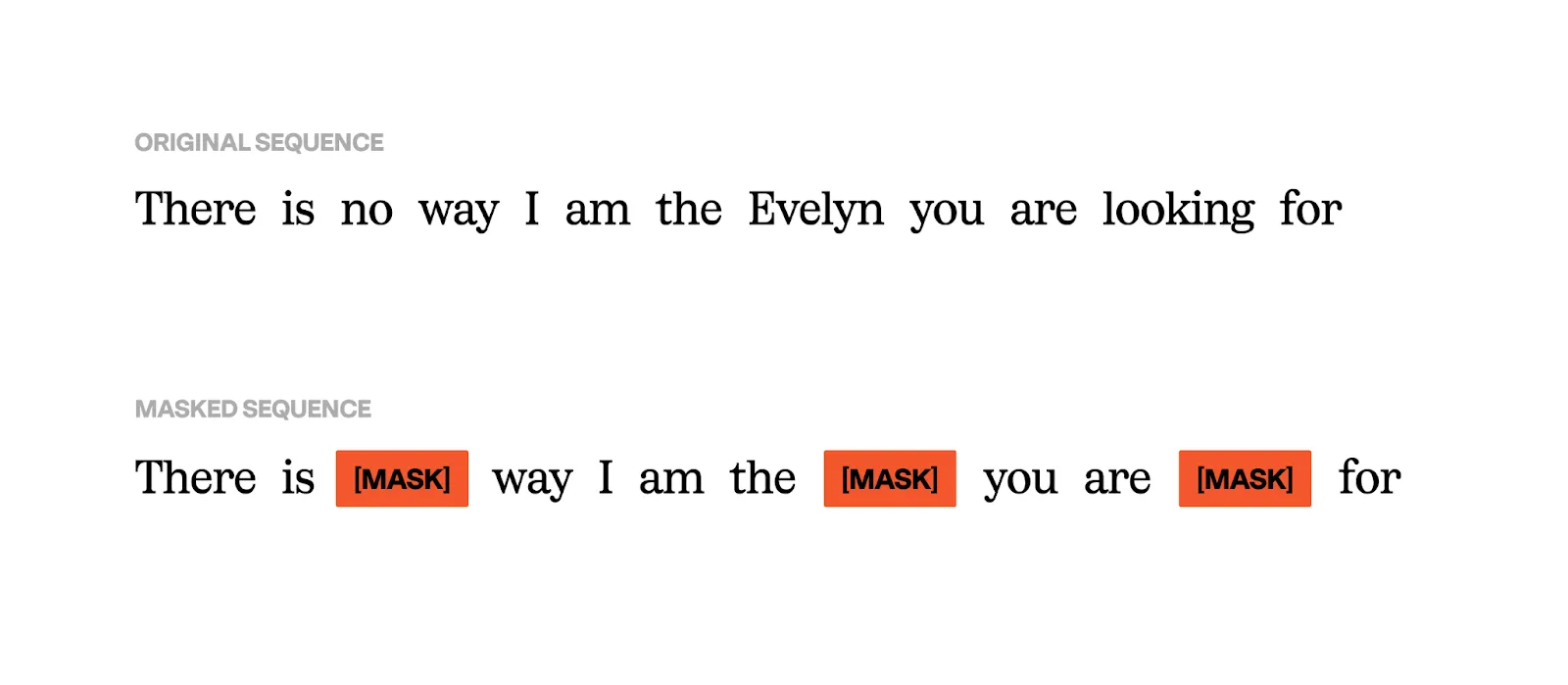

In fact, one year prior to the ChatGPT release (2021) was when early inklings of a solution for text diffusion were forming. In Structured Denoising Diffusion Models in Discrete State-Spaces, Austin et al. proposed an interesting idea: what if you could add noise to discrete spaces through masks? A mask simply obscures an individual token in a sequence, thereby messing with the semantic meaning of the overall sequence.

In my head at least – this explanation is not researcher-approved – I think of this solution as adding noise at the sequence level instead of the token level. Yes, you can’t just change a word, but you can remove a word in a sentence, which changes the semantic meaning of said sentence.

SEDD and a loss function for discrete diffusion

With the mask idea in place, further work could progress on making discrete diffusion possible. One major outstanding issue was figuring out the right loss function. Diffusion models operating in continuous spaces made use of score matching. In a pixel space, you’d train the model to figure out which “direction” to go in to get to a higher fidelity, less noisy pixel. You can think of diffusion models as learning a vector field over the data space at every noise level that points toward higher probability regions. At inference, you're integrating that vector field from pure noise back to the data manifold.

Unfortunately, this doesn’t really work for discrete spaces. If the score points you in the right “nearby direction” then you need the data to live on in a continuous space so that "nearby" and "direction" actually mean something. Discrete tokens don’t live in that space, there is no continuity between “cat” and “dog” (sorry 90’s kids), and you cannot nudge a token slightly in a direction.

Thankfully in 2023, a paper (SEDD) from Stefano’s lab made a breakthrough

by proposing score entropy, a novel loss that naturally extends score matching to discrete spaces, integrates seamlessly to build discrete diffusion models, and significantly boosts performance.

SEDD's contribution was defining a score-like quantity that's native to discrete spaces — the ratio of probabilities between states rather than a gradient. Instead of “which direction increases probability,” you ask “how much more probable is token A than token B at this position.” It won best paper at ICML 2024.

With SEDD, the field was finally in a place where diffusion for text was becoming possible. So Stefano, Aditya (now a professor at UCLA), and Volodymyr (now a professor at Cornell) got together in 2024 to discuss what was next. They believed that dLLMs were the future, so the next natural step was to try and train one at a competitive size to existing LLMs…but how? Despite Stanford being incredibly well funded for a university, the amount of compute required to train a meaningfully-sized dLLM was not within reach (the largest they had gotten to was 1B parameters). So they decided to start a company.

Inception announced their $50M seed in 2025 along with their flagship model, Mercury. Thanks to diffusion, Mercury was up to 10X faster and more efficient than similar quality SOTA LLMs.

When speed is speed but also speed is quality

It’s worth taking a bit of a detour to explain why speed matters so much for LLMs. Yea, 10x speedup is a fancy headline, and as a user you kind of do want your models to feel at least semi-instantaneous. But there is another important consumer of LLM responses, and that is LLMs themselves; particularly in an RL context.

Increasing amounts, perhaps even the majority, of progress in LLMs over the last couple of years is likely due to RL in post-training. Last year I wrote about how RL has unique infrastructure challenges (avoiding idle GPU cycles) since the model is doing training and inference at the same time. Today’s RL training pipelines spend many more times the cycles on inference (generation) as they do on training. If you can flip that equation, thanks to incredible speed from a dLLM, you have completely changed the RL scaling law. It’s a strange thing to say, but in a way speed becomes quality.

Alongside Inception, open source and academic work continues in the dLLM space. Early last year, a working group across several Chinese universities released LLaDA, a dLLM trained from scratch implementing all of the above concepts (masking, etc.). A few months later came Dream 7B, which wasn’t quite trained from scratch but instead was built on an existing AR LLM foundation (similar work was done to create DiffuGPT and DiffuLLaMA). These models are promising starts but undoubtedly not ready or in use for real production use cases.

Taking text diffusion models from academic to production

There is, as many readers know first hand, a long long space between ideas working in papers and ideas working in production. dLLMs had many years of catching up to do with autoregressive models, where thousands of researchers had been pounding away on improving every single part of the training and inference process for 5 years now. In particular there were 3 areas that needed improving before these things were ready for prod:

1) A caching system for diffusion models

Despite autoregressive models being relatively architecturally primitive, they can get pretty fast thanks to a nice property of AR: the ability to cache. Specifically, the KV cache allows you to generate each token once and then store it for efficient retrieval; without it, AR models would need to regenerate the entire token sequence on each generation step and thus be painfully slow.

Because diffusion models have bidirectional attention (i.e. every token can attend to all others in the sequence, you get past and future context), developing an efficient caching system is nontrivial. There are a few ideas in flight:

- Block Diffusion, which Volodymyr was part of, takes pieces from both diffusion and AR models and allows for a KV cache (with the associated efficiencies).

- Fast-dLLM builds on this hybrid AR / diffusion method where blocks of text are cached, but each individual block is generated via diffusion.

- DPad proposes a diffusion scratchpad that restricts attention to a small set of nearby suffix tokens via a sliding window and distance-decay dropout.

- dKV-Cache develops a couple of ideas, primary of which is a delayed caching strategy, wherein only the key and value states of decoded tokens gets cached.

Of these Fast-dLLM is the most widely adopted. A follow paper (Fast-dLLM v2) was released late last year that turns this idea into a full scale model, adapting an existing open source AR LLM into a diffusion model using this block caching strategy.

2) Parallel decoding

In order to have performance gains of AR models in practice, diffusion models must unmask more than one token per step (duh). But tokens are not statistically independent, and thus historically parallel generation of multiple tokens at once has significantly degraded quality.

At NeurIPS in 2025, Aditya’s new group at UCLA proposed a framework for balancing these constraints called APD (adaptive parallel decoding). APD dynamically adjusts the number of tokens sampled in parallel based on generated probabilities from the model and a small “sidecar” autoregressive model that can compute the likelihood of sequences in parallel.

Another approach is DAWN from Luo et al. This idea explicitly builds an inter-token dependency graph that allows the model to select more reliable tokens to unmask in parallel.

3) Reasoning and test time scaling

Pre-trained base models are promising, but not consumer-ready: to actually get used dLLMs will need to be post-trained like their AR counterparts. Do dLLMs respond to techniques developed for AR like SFT and RL? Can dLLMs reason the way SOTA LLMs today can?

Mid-last year Aditya’s lab at UCLA released an interesting paper in this space proposing d1, a framework for bringing the post-training techniques we know and love from AR models to dLLMs. There are two major innovations here:

- They take an existing SFT dataset (S1k) and adapt it to use masking, ergo allowing it to be used as part of dLLM training.

- They develop diffu-GRPO, a policy-gradient RL algorithm applied specifically to masked dLLMs.

Both of these techniques show promising results over a LLaDA base model, independently and in tandem (indicating a synergistic effect). Interestingly, despite the fact that the diffu-GRPO training process used a fixed sequence length of 256 tokens, they observed performance gains at other lengths too (128 and 512), which suggests that the model isn’t just overfitting, it actually learned some sort of general reasoning.

Another important trend that has driven gains for AR LLMs is inference-time scaling. Longer reasoning chains have demonstrated better performance and are now standard in models like Opus 4.6. Can diffusion models benefit from inference-time scaling as well?

Also out of Aditya’s lab, Reflect-DiT explores how to scale inference compute for diffusion models (particularly with images in this context). The idea is that instead of generating N images and picking the best, we can equip diffusion transformers with in-context reflection: the model sees its previous attempts alongside textual feedback and refines its generation accordingly, inspired by DeepSeek-R1's reasoning paradigm.

Inception, Mercury 2, and dLLMs of the future

For now, Mercury 2 is closed source, so we don’t know exactly what’s going on behind the scenes to make it work. To achieve the results it has (and be commercially viable) there is evidently much more going on than just a large pre-trained dLLM base model. It’s possible the company will release more information in the future, but it’s a black box for now.

But the funny thing about companies founded by academics is that for at least the first few years, even black boxes aren’t necessarily that opaque. Publishing leaves a paper trail (literally). And given how prolific the Inception cofounders have been on diffusion topics, I’d venture we can guess that many of their ideas over the last 5 years have probably made it into Mercury: d1, APD, block diffusion, etc.

And then there’s the inference question. Any company providing blazing fast inference must have their kernel writer(s) or at least the labor equivalent. The Mercury paper talks about dynamic batching and paging, which are their own can of worms to figure out for diffusion models. Note that Mercury itself is still a Transformer architecture, despite the fact that it was trained via diffusion.

What we do know is that Mercury 2 is fast as hell and that if there was ever a team that was going to figure out dLLMs, it’s probably this one. You can try Mercury 2 for free here, and get in touch with Sales if you’re interested for your company. And if this work is interesting to you, Inception is hiring.